Forget the cliché that search engine optimisation (SEO) is dead; it definitely still exists.

And, for the record, Google can now tell the difference between a website that deserves to rank and one that doesn’t better than ever before!

Long gone are the days of manipulation tactics that aimed to push up keyword positions in search results. Today, Google uses its portfolio of “algorithms” and “machine learning programs” to find, digest and display relevant web pages that match the needs behind a user’s search query.

Whether you help a bunch of clients or run your own sites, a deep understanding of how Google works can boost website traffic and leave competitors behind. The trick is understanding how and why Google chooses to rank websites.

Before diving in, it is important to understand what a search “algorithm” is and how Google works. Let’s begin.

In simple terms…

A search algorithm is a process or set of rules used by search engines to determine the significance of a web page. Algorithms are used to filter, digest and evaluate web pages to ensure search results match a user’s search query.

As the internet has grown exponentially in size, Google has had to become a data-filtering machine. To deliver results that match a user’s search query, Google uses a series of complex rules and procedures (i.e. algorithms) to find, filter and digest web pages from across the internet for its own “index”. It is this “index” of web pages that Google uses when displaying relevant search results.

Remember, Google is not the internet; it should instead be seen as a “gateway” that helps users find up-to-date, accurate information time and time again. As the “go-to” search engine, Google dominates the industry, processing more than 3.5 billion searches worldwide every day! With so much user demand and the power to make or break websites and businesses, investing the time to co-operate with and impress Google’s algorithms is a win-win for your own or your clients’ websites.

Let’s move on to learning more about four of Google’s most important ranking algorithms and what you can do to get in their good books.

Panda algorithm

TLDR: Panda loves to read and digest information. Try to create content that answers user search queries, and never copy from others. Grab Panda’s attention with long-form content that’s ten times better than the competition, but don’t bulk pages out with “fluff” if there’s no need. If you run an eCommerce site, focus your efforts on building high-quality product pages that deserve to rank.

What is Panda?

First released in February 2011, Google’s Panda algorithm assigns web pages a quality “score” based on a range of criteria, mainly focused on content, and it is still regularly updated. Originally built as a “ranking filter” to sieve sites with plagiarized or thin content out of search results, Panda was incorporated into Google’s core ranking algorithm in early 2016. Its content-focused metrics remain an important backbone of Google’s ranking factors, helping search results deliver useful information time after time and keeping Google the “go-to” search engine on the web.

How does it work?

Just like the animal’s thick coat and unique markings, Panda likes to see thick, quality content that is unique. The algorithm recognises duplicated content on a page-by-page level (both within a site and across other sites) and certainly doesn’t appreciate keyword stuffing. Websites with lots of duplicated pages or poor-quality content may see ranking drops, which in turn reduce traffic as Google starts to devalue the domain. Pages with high-quality content that answers user search queries should be rewarded with higher ranking positions. However, this is not always the case, especially in competitive online markets where other ranking factors, such as domain equity and backlinks, take precedence.
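Google has not published how Panda detects duplication, so the following is only an illustration of one common, general-purpose technique for spotting near-duplicate pages on your own site: split each page’s text into overlapping three-word “shingles” and compare pages using Jaccard similarity. The 0.8 threshold, the page URLs and the sample text are all assumptions chosen for the example.

```python
import re


def shingles(text: str, size: int = 3) -> set:
    """Return the set of overlapping word n-grams ("shingles") in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        # Very short texts become a single shingle so they can still be compared.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by all distinct shingles (1.0 = identical sets)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict, threshold: float = 0.8) -> list:
    """Return (url_a, url_b, similarity) for every page pair at or above threshold."""
    signatures = {url: shingles(text) for url, text in pages.items()}
    urls = sorted(signatures)
    pairs = []
    for i, a in enumerate(urls):
        for b in urls[i + 1:]:
            score = jaccard(signatures[a], signatures[b])
            if score >= threshold:
                pairs.append((a, b, round(score, 2)))
    return pairs


# Hypothetical pages: two products share a copied manufacturer description.
pages = {
    "/red-kettle": "This stainless steel kettle boils water quickly and switches off automatically.",
    "/blue-kettle": "This stainless steel kettle boils water quickly and switches off automatically.",
    "/about": "We are a family run shop selling kitchen goods since 1998.",
}
print(near_duplicates(pages))  # [('/blue-kettle', '/red-kettle', 1.0)]
```

Pairs scoring close to 1.0, such as two product pages sharing a copied manufacturer description, are good candidates for rewriting with unique copy.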

How to make sure you don’t get penalized

Webmasters, SEO managers and website owners can all make a difference when it comes to staying on the right side of Panda. As the algorithm is continually updated, it’s important to always follow best-practice content strategies that protect your website from future updates. Follow these three content strategies to stay ahead of the game…

- Make sure the content you publish is 100% unique – never “spin” or copy content.

- Don’t create pages with thin or duplicated content – always try to write a minimum of 200 words per page. Writing “some” content is always better than no content.

- Where pages with no content can’t be updated with more text, consider removing them from Google’s index to reduce negative exposure. The reliable way to do this is a “noindex” robots meta tag, which SEO plugins such as Yoast SEO can add for you. A robots.txt rule only stops Google crawling a page; it does not guarantee the page stays out of the index, and it also stops Google from seeing a “noindex” tag on that page.

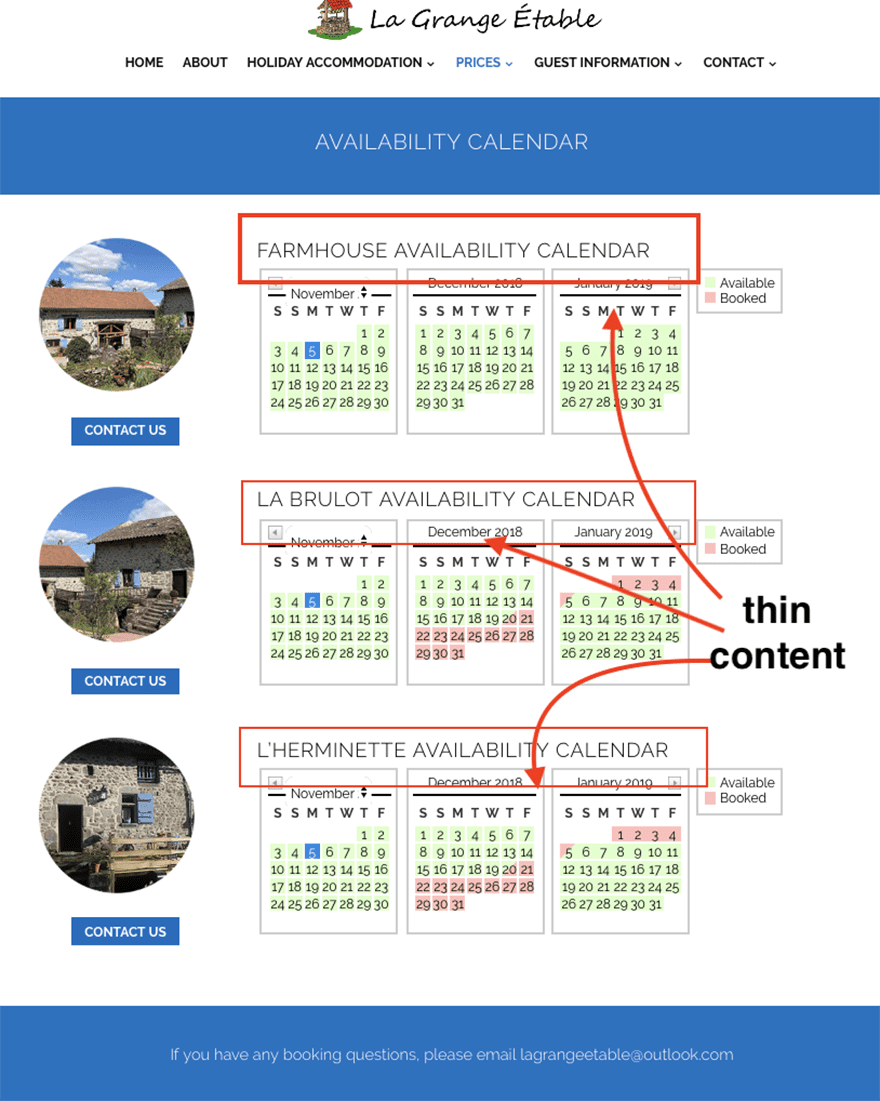

Here’s an example of a web page that might trigger Panda because it has little to no content. The page is code-heavy and gains nothing from being in Google’s index.

Pro tip: For pages where little to no content exists, try adding content that answers common user questions, or de-index these pages with a “noindex” tag instead (a robots.txt rule on its own only blocks crawling).
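To put the 200-word guideline into practice, a quick audit script can flag thin pages before Google does. The sketch below uses only Python’s standard-library html.parser to count the roughly visible words on a page (skipping script, style and noscript blocks) and to check for a robots meta tag containing “noindex”. The audit() helper and the sample pages are hypothetical, and the word count is approximate: it also counts text such as the page title. Keep in mind that a page blocked in robots.txt is never fetched, so Google cannot see a noindex tag on it.

```python
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Collects roughly-visible words and robots meta directives from one HTML page."""

    SKIP = {"script", "style", "noscript"}

    def __init__(self):
        super().__init__()
        self.words = []
        self.robots = set()
        self._skip_depth = 0  # > 0 while inside <script>, <style> or <noscript>

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1
        elif tag == "meta":
            attributes = dict(attrs)
            # Directives may target all robots or Googlebot specifically.
            if (attributes.get("name") or "").lower() in {"robots", "googlebot"}:
                content = attributes.get("content") or ""
                self.robots |= {d.strip().lower() for d in content.split(",")}

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words.extend(data.split())


def audit(html, min_words=200):
    """Return 'noindex', 'thin (N words)' or 'ok' for one page (hypothetical helper)."""
    parser = PageAudit()
    parser.feed(html)
    parser.close()
    if "noindex" in parser.robots:
        return "noindex"
    if len(parser.words) < min_words:
        return f"thin ({len(parser.words)} words)"
    return "ok"


basket = ("<html><head><title>Basket</title></head><body>"
          "<script>var total = 0;</script><p>Your basket is empty.</p></body></html>")
print(audit(basket))  # thin (5 words)
```

Pages reported as “thin” either need more useful text or a noindex tag; pages already reported as “noindex” can be left alone.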

Penguin algorithm

TLDR: Penguin loves to see trustworthy relationships between sites. Avoid Penguin’s slap by creating genuine, quality links with others across the internet. Just like in the real world, there is no shortcut to building strong relationships. If you are running link building strategies, check your website’s backlinks regularly to keep your footprint clean, tidy and natural.

What is Penguin?

First released in April 2012 and later incorporated (like Panda) into the core ranking algorithm in 2016, Google’s Penguin algorithm is a real-time, location- and language-independent data processing filter that aims to uncover and devalue websites with backlinks that may be deemed manipulative or unnatural. Continual Penguin updates have considerably changed the SEO industry, and today, engaging in outdated tactics can leave websites penalized and even delisted from Google’s index. Because the algorithm focuses solely on backlinks, SEO managers and webmasters now need to build and attract high-quality backlinks that act as a strong “vote of confidence” between websites.

How does it work?

Just like penguin huddles, the algorithm likes to see trusted “link” relationships around websites. Despite industry noise, high-quality link building is still one of the most important tactics for driving ranking increases, and Penguin keeps a close eye on websites to ensure they do not manipulate the system. Running in real time, Penguin kicks into action when sites build suspicious volumes of links in a short space of time, pay for sponsored links or use spammy black-hat techniques. The algorithm also monitors “anchor text” profiles, as these can be cleverly orchestrated to influence rankings. Trying to manipulate Penguin with unethical tactics could result in a huge loss of website traffic.
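Penguin’s actual thresholds are not public, but you can watch your own link velocity for sudden spikes. This sketch assumes you have exported the “first seen” date of each new backlink from a backlink tool (the export format here is hypothetical). It groups links by ISO week and flags any week with more than three times the median weekly count. The factor of 3 is an arbitrary starting point, not a Google figure.

```python
from collections import Counter
from datetime import date
from statistics import median


def weekly_counts(first_seen):
    """Count new backlinks per ISO (year, week); weeks with no new links are absent."""
    counts = Counter((d.isocalendar()[0], d.isocalendar()[1]) for d in first_seen)
    return dict(sorted(counts.items()))


def velocity_spikes(first_seen, factor=3.0):
    """Return weeks whose new-link count exceeds factor x the median of active weeks."""
    counts = weekly_counts(first_seen)
    if not counts:
        return []
    baseline = median(counts.values())
    return [week for week, n in counts.items() if n > factor * baseline]


# Hypothetical export: two new links a week, then a sudden burst of 20.
links = ([date(2024, 1, 1)] * 2 + [date(2024, 1, 8)] * 2 + [date(2024, 1, 15)] * 2
         + [date(2024, 1, 22)] * 2 + [date(2024, 1, 29)] * 20)
print(velocity_spikes(links))  # [(2024, 5)]
```

A flagged week is not proof of a problem (a genuine press mention can cause a burst), but it tells you which links to review first.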

How to make sure you don’t get penalized

In the words of Google: “Avoid tricks intended to improve search engine rankings. A good rule of thumb is whether you’d feel comfortable explaining what you’ve done to a Google employee”. To stay in Penguin’s good books, build links that you would want to exist anyway, not just because you have a short-term goal. If you are a link building “newbie” or want to change your ways, always approach link building with the motto of “link earning”. This means building links you would never want to remove.

Noticed a recent drop in traffic or worried about being penalized? Stay calm: you can take action before it’s too late. Paid backlink profiling tools such as Majestic, Ahrefs and Link Research Tools will help you evaluate a website’s link footprint and highlight areas to improve.

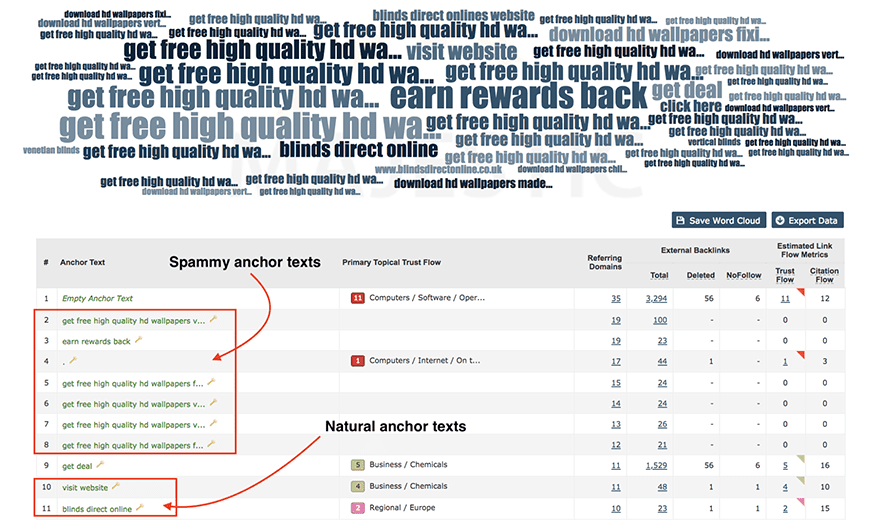

To quickly gauge the level of spammy links surrounding a domain, review its anchor text breakdown. Using Majestic’s tool, here’s an example of a site whose top 10 anchor text list looks unnatural and highlights lots of spammy links.

Pro tip: Anchor text profiles should be natural and contain mainly branded, product- or service-related keywords. If you spot spammy or unrelated anchor text in your top 10 list, this could be a cause for concern and should be investigated immediately.
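As a rough self-check of that top 10 list, the sketch below normalises anchor texts, counts them and marks which ones contain a brand term. The brand terms and the anchor list are made-up examples. Anchors flagged as unbranded are not automatically spammy, since product and service keywords are also natural, so treat the output as a list for manual review rather than a verdict.

```python
from collections import Counter


def anchor_profile(anchors, brand_terms, top_n=10):
    """Return (anchor, % of all links, contains_brand) for the top_n anchors."""
    normalised = [a.strip().lower() for a in anchors]
    total = len(normalised)
    report = []
    for text, n in Counter(normalised).most_common(top_n):
        branded = any(term.lower() in text for term in brand_terms)
        report.append((text, round(100 * n / total, 1), branded))
    return report


def unbranded_share(report):
    """Percentage of link volume in the report whose anchor has no brand term."""
    return round(sum(share for _, share, branded in report if not branded), 1)


# Hypothetical backlink export for a fictional brand, "Acme Kettles".
anchors = ["Acme Kettles"] * 6 + ["acmekettles.com"] * 2 + ["cheap payday loans"] * 2
report = anchor_profile(anchors, brand_terms=["acme"])
for row in report:
    print(row)
print(unbranded_share(report))  # 20.0
```

Here “cheap payday loans” is the one unbranded anchor, and it is clearly unrelated to a kettle shop, which is exactly the kind of entry worth investigating.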

Hummingbird algorithm

TLDR: Hummingbird is ready and waiting to feed on your website like sweet nectar. Try attracting attention by creating pages that match the “search intent” of the user. Explore possible synonyms and target longer tail keywords with less competition. Learn from competitors that may be outranking you. If you struggle to write content, answer common customer questions in a natural and engaging way – here’s a great example.

What is Hummingbird?

First released in September 2013, Google’s Hummingbird algorithm significantly changed the way the search engine interprets user queries and rewards websites that answer a user’s search phrase. The introduction of Hummingbird pushed websites to focus on matching a user’s “search intent” rather than simply trying to rank for a keyword or phrase. The goal of this new algorithm was to help Google better understand the “what” behind a search query, rather than displaying results on a broad, keyword-by-keyword basis. In short, Hummingbird helps users find what they want while enabling Google to find, filter and display results that focus more precisely on the meaning behind a query.

How does it work?

Just like the bird it’s named after, the algorithm is instantly recognisable every time a Google results page is displayed. You can see Hummingbird in action at the bottom of Google’s results page, where other “theme-related” results are shown that do not necessarily contain the keywords from the original search query. Hummingbird is not a penalty-based algorithm. Instead, it breaks down long, conversational queries to unpick the “intent”, considers how relevant each website is to the search as a whole, and rewards sites that use natural synonyms and long-tail keywords. Hummingbird not only understands how different web audiences behave; it can also quickly recognise what a searcher is looking for, displaying related suggestions in the search box before showing results.

For unrecognized search queries (did you know that around 15% of all daily Google searches have never been searched before?), Google harnesses the power of its artificial intelligence (AI) algorithm, RankBrain. Released in April 2015, this machine learning system not only deciphers the “intent” behind new queries but also filters the displayed results accordingly. You can read more about Google’s RankBrain algorithm here.

What can I do to catch its attention?

Ultimately, websites that meet a combination of the algorithm’s criteria should see significant ranking increases over time. If your site currently ranks poorly within your niche, there is a high chance that Hummingbird has some influence over your positioning. Try to build strong “Hummingbird-friendly” foundations at the start of any digital project, as this will pay dividends. This can include incorporating conversational answers to questions within content (here’s a great example of this), including synonyms, and targeting long-tail keywords or phrases. To find synonyms, Ubersuggest is a great place to start. Google’s related search results are also always worth looking at, and don’t forget to check out the competition that’s ranking above you.

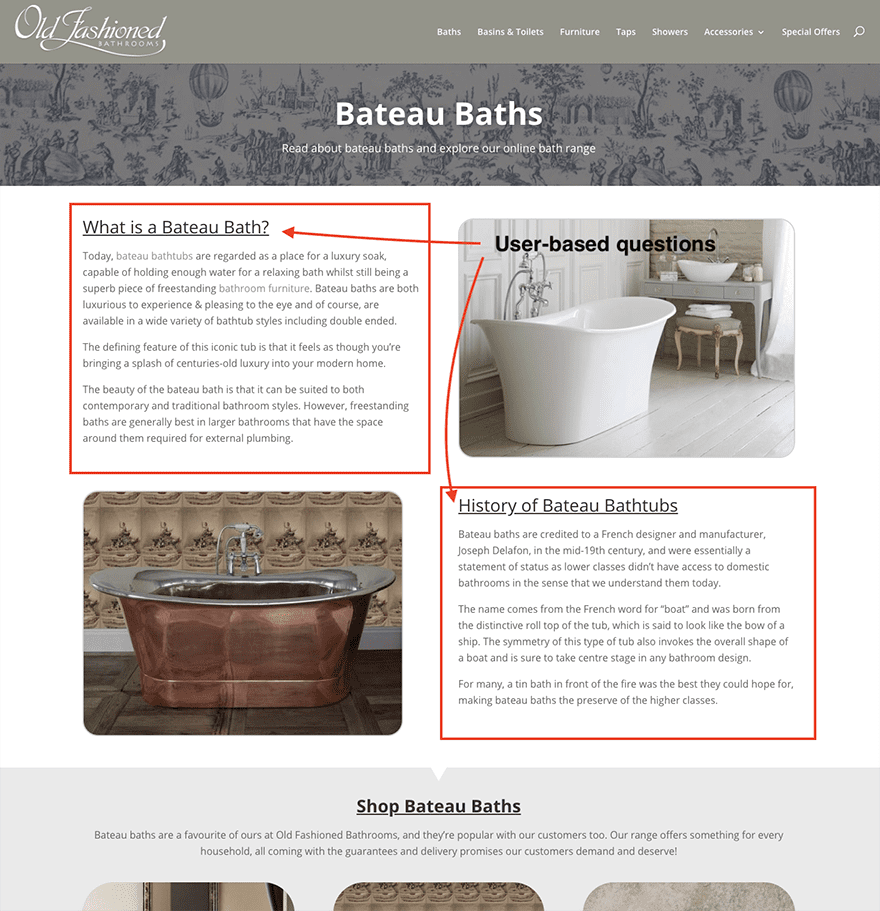

Here’s a great example of an eCommerce website that generates content based around common customer questions. This helps Hummingbird to understand website relevancy.

Pro tip: Before writing or creating new web pages, answer the following questions: what is the purpose of this page? What is it trying to target? Scoping the purpose early gives time to research possible synonyms, long tail keywords and to explore the competition.
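Once you have a research list of synonyms and long-tail phrases, a quick check can show which ones a draft already covers. This sketch does simple whole-phrase matching after lower-casing and stripping punctuation; the three-word cut-off for “long-tail” is an assumption, not a Google definition, and the draft and phrases are made up. Because it matches exact wording, it will miss paraphrases that Hummingbird may well understand, so treat it as a research aid rather than a score.

```python
import re

WORD = re.compile(r"[a-z0-9']+")


def coverage(page_text, phrases, long_tail_min_words=3):
    """Split target phrases into found/missing, tagging each as head or long-tail."""
    text = " ".join(WORD.findall(page_text.lower()))
    result = {"found": [], "missing": []}
    for phrase in phrases:
        words = WORD.findall(phrase.lower())
        # Whole-phrase match on word boundaries, after normalising punctuation.
        pattern = r"\b" + re.escape(" ".join(words)) + r"\b"
        key = "found" if words and re.search(pattern, text) else "missing"
        kind = "long-tail" if len(words) >= long_tail_min_words else "head"
        result[key].append((phrase, kind))
    return result


draft = "How do I descale a kettle? Our guide explains kettle descaling with vinegar."
research = ["descale kettle", "how do I descale a kettle", "remove limescale from kettle"]
print(coverage(draft, research))
```

Note that “descale kettle” is reported missing even though the draft says “descale a kettle”, which shows the limits of exact matching compared with intent-based understanding.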

Pigeon algorithm

TLDR: Pigeon is ready to deliver better local results once you start feeding it. Take advantage of Google My Business and get listed in genuine, trusted online local directories such as Yelp. When creating content, try to use text and imagery that are distinctively associated with a location or area. If you run a service-based business, make sure you are generating user reviews; this will help you stand out from the local crowd.

What is Pigeon?

First released in July 2014, Google’s Pigeon algorithm was launched to provide better results for local searches. Before Pigeon, Google’s local search results produced a mixed bag of information, and an update was needed to incorporate both location and distance as important factors when displaying results. Google later reduced the number of displayed local business results from 7 to 3, making local exposure even tougher. However, the algorithm effectively combined Google search results with Google Maps searches and set in motion a more cohesive way for websites to rank organically for local searches.

How does it work?

Just like the navigational ability of homing pigeons, the algorithm relies heavily on data about a user’s location and distance before displaying search results (this data must be shared with Google; otherwise, results are based on keywords only). Pigeon is a great example of Google in a “mobile-first” world, striving to deliver relevant search results during every possible interaction. Although it is not possible to control where potential customers use Google, Pigeon now takes more notice of local directory listings, reviews and local reputation when ranking results. This algorithm does not penalize websites; instead, it gives prominence to sites that deserve to be listed locally.

Note: Pigeon currently only affects search results in the English language.

How to make sure you get noticed locally

Pigeon loves to see local relationships and rewards data consistency across the internet. It is important to focus on foundational SEO that incorporates location-based keywords and to take advantage of Google My Business listings. If you really want to impress Pigeon, build high-quality directory listings with consistent data and work hard to earn positive user-generated reviews. With so many local businesses now relying heavily on Google to drive foot traffic and sales, a deep understanding of this algorithm will help you carve out some much-needed space from the local competition.

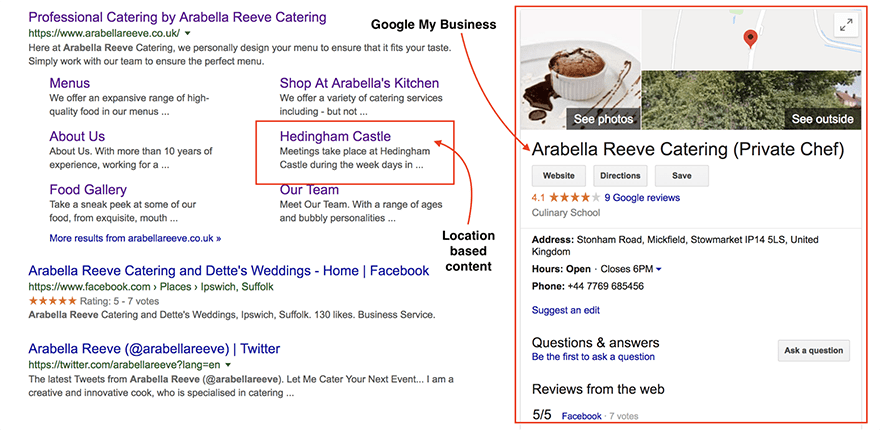

Here’s a great example of a local business that has taken advantage of Google My Business and publishes content based around local keywords. This helps Pigeon to understand the location of potential customers.

Pro tip: For websites that rely heavily on local traffic, treat local SEO much like your local reputation. Provide the same data across the internet, take reviews seriously, and help the Pigeon algorithm understand your audience’s location with targeted content.
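Data consistency is easy to check once your listings are collected in one place. The sketch below normalises the name, address and phone number (often shortened to NAP) from each source and reports which fields disagree. The normalisation rules are deliberately simple assumptions: they ignore case, punctuation and phone formatting, but would still treat “St” and “Street” as different. The business details are fictional.

```python
import re


def normalise_nap(listing):
    """Reduce name, address and phone to a comparable form (simple, assumed rules)."""
    def clean(value):
        # Lower-case, turn punctuation into spaces and collapse repeated whitespace.
        return " ".join(re.sub(r"[^a-z0-9]+", " ", value.lower()).split())

    phone_digits = re.sub(r"\D", "", listing["phone"])
    return clean(listing["name"]), clean(listing["address"]), phone_digits


def inconsistent_fields(listings):
    """Return the NAP fields whose normalised values differ between sources."""
    fields = ("name", "address", "phone")
    seen = {field: set() for field in fields}
    for listing in listings.values():
        for field, value in zip(fields, normalise_nap(listing)):
            seen[field].add(value)
    return [field for field in fields if len(seen[field]) > 1]


# Fictional business details collected from three sources.
listings = {
    "website": {"name": "Acme Plumbing", "address": "12 High Street, Leeds",
                "phone": "0113 496 0000"},
    "google_my_business": {"name": "ACME Plumbing", "address": "12 High Street Leeds",
                           "phone": "0113-496-0000"},
    "yelp": {"name": "Acme Plumbing.", "address": "14 High Street, Leeds",
             "phone": "(0113) 4960000"},
}
print(inconsistent_fields(listings))  # ['address']
```

Formatting differences are ignored, so only the genuinely wrong house number on one listing is reported.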

Conclusion

Gaining a deeper understanding of how Google ranks websites helps you spend time in the areas where change can most impact performance. It’s important to remember that increasing website rankings is a long process that requires strategic guidance, and meeting the criteria of Google’s algorithms can sometimes be tricky. At the same time, aligning with the expectations of each algorithm naturally shapes the strategy needed to succeed online. Executing tactics that aim to impress Google’s algorithms is always the best strategy, as these algorithms ultimately set the rules that can make or break any future achievements.

It’s inevitable that Google will keep updating and tweaking its algorithms. Even as the most dominant internet search engine, Google continually rocks the boat to disrupt the SEO industry, improve search results and stay ahead of its own competition. This is because the moment users do not get the answer they are looking for, they may well switch to another search engine. Over time, if searches on Google decline, so does its huge revenue from Google Ads, so it’s in the search engine’s best interest to maintain and improve search results on an ongoing basis.

The four algorithms covered in this post are only part of Google’s ranking system. In total, there are over 200 ranking factors examined across a wide range of sophisticated algorithms and machine learning programs, and it is ultimately these algorithms that decide where a web page should rank in search results. Because updates keep arriving from both technical and behavioral perspectives, it is essential to stay up to date over time.

If you’re interested in keeping up to date with Google’s latest algorithm updates and making sure your clients’ or your own sites climb the search rankings and avoid potential traffic losses, here are a few resources to bookmark:

- Moz Google Updates – displays updates stretching back to the year 2000

- SEMrush Sensor – shows a daily graph of search ranking volatility

- Search Engine Land – highlights Google news, updates and press releases
